According to Boltzmann, if it approaches a neighbor molecule it is repelled by it, but if it moves farther away there is an attraction. This correlation occurs because the numbers of different microscopic quantum energy states available to an ordered system are usually much smaller than the number of states available to a system that appears to be disordered.įrom his famous 1896 Lectures on Gas Theory, Boltzmann diagrams the structure of a solid body, as shown above, by postulating that each molecule in the body has a "rest position". Moreover, according to the third law of thermodynamics, at absolute zero temperature, crystalline structures are approximated to have perfect "order" and zero entropy. In solids, for example, which are typically ordered on the molecular scale, usually have smaller entropy than liquids, and liquids have smaller entropy than gases and colder gases have smaller entropy than hotter gases. Owing to these early developments, the typical example of entropy change Δ S is that associated with phase change. If an organism was in this type of “isolated” situation, its entropy would increase markedly as the once-living components of the organism decayed to an unrecognizable mass. Unlike temperature, the putative entropy of a living system would drastically change if the organism were thermodynamically isolated.

The conditioner of this statement suffices that living systems are open systems in which both heat, mass, and or work may transfer into or out of the system. This local increase in order is, however, only possible at the expense of an entropy increase in the surroundings here more disorder must be created. solar heating action, and that this applies to machines, such as a refrigerator, where the entropy in the cold chamber is being reduced, to growing crystals, and to living organisms. The common argument used to explain this is that, locally, entropy can be lowered by external action, e.g. Yet all around us we see magnificent structures-galaxies, cells, ecosystems, human beings-that have all somehow managed to assemble themselves.” The laws of thermodynamics seem to dictate the opposite, that nature should inexorably degenerate toward a state of greater disorder, greater entropy. In the recent 2003 book SYNC – the Emerging Science of Spontaneous Order by Steven Strogatz, for example, we find “Scientists have often been baffled by the existence of spontaneous order in the universe. Thus, if entropy is associated with disorder and if the entropy of the universe is headed towards maximal entropy, then many are often puzzled as to the nature of the "ordering" process and operation of evolution in relation to Clausius' most famous version of the second law, which states that the universe is headed towards maximal “disorder”. The entropy of the universe tends to a maximum.

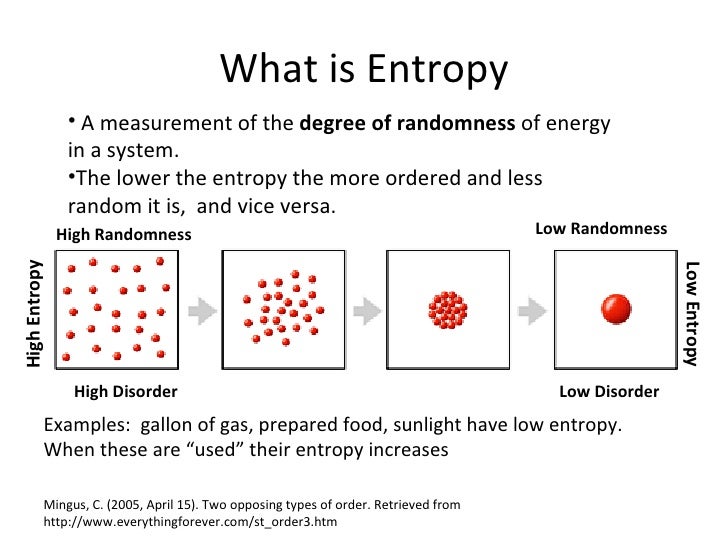

The relationship between entropy, order, and disorder in the Boltzmann equation is so clear among physicists that according to the views of thermodynamic ecologists Sven Jorgensen and Yuri Svirezhev, “it is obvious that entropy is a measure of order or, most likely, disorder in the system.” In this direction, the second law of thermodynamics, as famously enunciated by Rudolf Clausius in 1865, states that: If you have more than one particle, or define states as being further locational subdivisions of the box, the entropy is larger because the number of states is greater. What is the probability that a certain number, or all of the particles, will be found in one section versus the other when the particles are randomly allocated to different places within the box? If you only have one particle, then that system of one particle can subsist in two states, one side of the box versus the other. As an example, consider a box that is divided into two sections. This stems from Rudolf Clausius' 1862 assertion that any thermodynamic process always "admits to being reduced to the alteration in some way or another of the arrangement of the constituent parts of the working body" and that internal work associated with these alterations is quantified energetically by a measure of "entropy" change, according to the following differential expression: ∫ δ Q T ≥ 0, which relates entropy S to the number of possible states W in which a system can be found. In thermodynamics, entropy is often associated with the amount of order or disorder in a thermodynamic system.

Interpretation of entropy as the change in arrangement of a system's particles Boltzmann's molecules (1896) shown at a "rest position" in a solid
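The two-section box example lends itself to a small numerical sketch. The Python below is only an illustration under stated assumptions that the text does not spell out: the particles are treated as distinguishable, each one lands in any section independently and with equal probability, and every arrangement counts as one state W in S = k_B ln W. The function names are hypothetical and chosen for this example.

```python
import math

# Boltzmann constant in J/K (exact value in the SI since 2019).
K_B = 1.380649e-23


def microstates(n_particles: int, n_sections: int = 2) -> int:
    """Count arrangements of distinguishable particles over the sections.

    Assumption: each particle independently occupies one of n_sections.
    """
    return n_sections ** n_particles


def ways_with_k_in_first_section(n_particles: int, k: int) -> int:
    """Count arrangements of a two-section box with exactly k particles in the first section."""
    return math.comb(n_particles, k)


def prob_all_in_given_section(n_particles: int, n_sections: int = 2) -> float:
    """Probability that random allocation puts every particle in one chosen section."""
    return 1 / microstates(n_particles, n_sections)


def boltzmann_entropy(w: int) -> float:
    """Entropy S = k_B * ln(W) for W equally likely states."""
    return K_B * math.log(w)


if __name__ == "__main__":
    for n in (1, 2, 10, 100):
        w = microstates(n)
        print(n, w, prob_all_in_given_section(n), boltzmann_entropy(w))
```

With one particle the count is two states, matching the text. Adding particles or subdividing the box further (a larger `n_sections`) raises W and therefore S, while the chance of finding every particle in one chosen section shrinks as 1/W.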
